How we created and implemented Ethical Guidelines to help our teams be more human-centric

At DiUS, we have a strong focus on exposing our people to new and emerging technologies. We do this because of our insatiable desire to explore the new and tinker with what might be.

We hold Special Interest Groups at least twice a week on a variety of topics, and our people are encouraged to attend meetups, conferences and workshops. We also hold in-house training sessions, run by our people for our people, to share what we know.

Our Experience Design team holds a two-day XD Strategy Session twice a year to discuss whatever theme is most relevant and impactful to the business and our customers at the time.

At one of our more recent sessions we discussed Artificial Intelligence (AI). We selected this theme because it was becoming increasingly important for us to understand the implications it might have on our discipline. We had a lot of questions around what tools and methods we would need and how our roles would change and evolve in order to contribute to AI projects.

The unconference approach to our XD Strategy Session

The XD Strategy Session kicked off with an info session, which included guest speakers who provided the inspiration that would fuel our discussions about all things AI at DiUS. We invited our most senior Machine Learning (ML) experts to talk us through the various projects they had been involved in, along with our partnership and client relationship leads, who provided insight into the types of questions our clients have about AI.

The info session fueled our discussions for the remainder of the day. We took the unconference approach: we popped a range of discussion topics on a wall and prioritised them into the time slots that would fill the next 10 hours of discussion and planning.

One of the topics we were particularly interested in and decided to explore was:

How might we ensure that all AI products we create at DiUS consider the ethical implications that protect and respect the end user?

Our preferred approach is to include an individual who is user-centric and knowledgeable in ethical considerations (often an Experience Designer) on all AI projects. However, this is not always possible due to resourcing constraints, limited budgets and the experimental nature of many AI initiatives. So we decided to create a set of Ethical Guidelines that would champion the voice of the user, even if we couldn’t be part of every AI project.

For us, these Ethical Guidelines are a starting point and we will continue to build on them and refine them as we gain new insights into the world of AI.

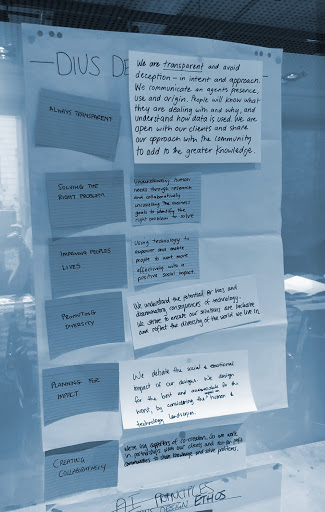

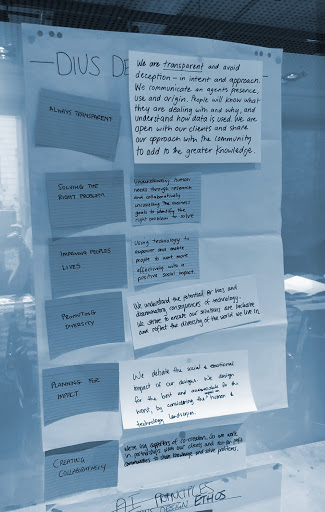

Always be transparent

We are transparent in our engagement with clients and their customers. The purpose and identity of an AI-powered system are communicated clearly so that the end user is aware of its presence and understands how their data will be used.

Improve people’s lives

We believe in the power of AI to augment work processes, improve the efficiency of systems and predict events and actions that enable people to live better lives. We believe this must be approached with human direction, monitoring and review processes to ensure systems continue to improve human outcomes over time. Where AI systems automate functions previously done by people, we will work with the business to deliver strategies and programmes to mitigate the human impact.

Promote diversity

We understand the potential for AI systems to be inadvertently corrupted with bias and discrimination. By understanding the potential biases and unintended consequences of an AI system, we are able to proactively avoid them and monitor outcomes before and after deployment to identify and amend any issues. AI systems must be representative of all people and cultures, without bias, and accommodate the different laws, policies, and social and cultural factors around the world.

Plan for impact

We use research to predict and analyse the social and emotional impact of technologies, and use that research in constructive planning to educate and support.

Create collaboratively

We believe in using human-centred design methods to collaborate with the client and the client’s target market, understand the problem space, and co-create solutions. This ensures we understand the problem and its landscape, actively evaluate biases early, and analyse human processes to identify benefits of human augmentation while planning for impact and building empathy into the technology that we create.

But how do you implement Ethical Guidelines?

It’s all very well and good to have a set of Ethical Guidelines; however, the challenge is ensuring that they are actually implemented. These are just some of the ways that worked for us.

Creating Advocacy

Spread the word! Educate and inform. People will only embrace change if they can see the true value in what they have been asked to do. This means you’ll need to present a case to your team that encourages them to see the value in implementing the Ethical Guidelines, and also outline what might go wrong if they don’t.

Include them as part of the workflow

Provide the team with examples of when the guidelines should be used. If you blend them into the team’s workflow and processes, there will be less resistance to adoption. For example, include a 20-30 minute activity during project kick-off or discoveries, and also run through the guidelines in retros.

Assign a custodian

Assign a person within your organisation who is the ‘go to’ for all things ethics related. This is the person teams can go to if they have questions or feedback. This person should touch base with each AI project team at the end of the project to review how the guidelines were used and to iterate on them if certain elements are not working or are missing.

Discuss the impact on users at the start of a project

Everything that is created by a human is typically for a human. This means that there is always some sort of impact on the target user. This impact is usually positive; however, there are times when a solution can affect users in negative ways. Discussions on how a project will add value for, or otherwise impact, a user should happen at the start of a project. Introducing the Ethical Guidelines here is highly recommended!

Review and continuously improve

The custodian should gather feedback from teams regularly and update the Ethical Guidelines accordingly. We recommend that they be revised at least once a year, either to edit the existing guidelines or to add new ones that may have been missed.

Do I need to create my own ethical guidelines?

To get started fast, we recommend that you use the guidelines in this blog post. However, you can also check out those that organisations such as the Department of Industry, Science, Energy and Resources, Google and the European Commission have created.

If you decide to create your own, we suggest you take a collaborative approach and set up a workshop with a diverse set of mindsets and roles in the room. Keep it to fewer than six people if possible. Provide them with existing examples as a starting point and modify them to your own needs.

What next?

Remember, everything that we create has an end user in mind. It’s imperative that we apply a human lens to everything and consider the ethical implications, empathy and effect that the products and services that we create have on the world’s inhabitants. It’s easy to get caught up in the excitement of new technological innovations and forget about the impact we may be having on others. So please take these Ethical Guidelines and use them to create a better experience for your users.