In the fast-paced digital age, the demands on modern applications have grown sharply. Users expect real-time updates, seamless interactions, and robust scalability. To meet these evolving needs, software engineers and architects have turned to event-driven architectures (EDA) as a game-changing paradigm. In this blog post, I will explore the concept of EDAs, their benefits, and the reasons behind their rise in popularity.

Understanding event-driven architectures

EDA is a software design pattern that enables communication between different components or services in a system through the use of events. In this architecture, the flow of data and the triggering of actions are based on the occurrence of events rather than traditional synchronous communication between components. An EDA has four key concepts:

1. Event

Events are messages or signals that represent occurrences or changes in the system. They convey information about a specific activity that has happened or a state that has been updated.

2. Event producers

These are components or services responsible for generating events when specific actions or changes occur within the system. Event producers emit events, making them available for other components to process.

3. Event consumers

Event consumers are components or services that listen for and process events. When an event is produced, the relevant event consumers can react to it by performing specific actions, updating their state, or triggering further events.

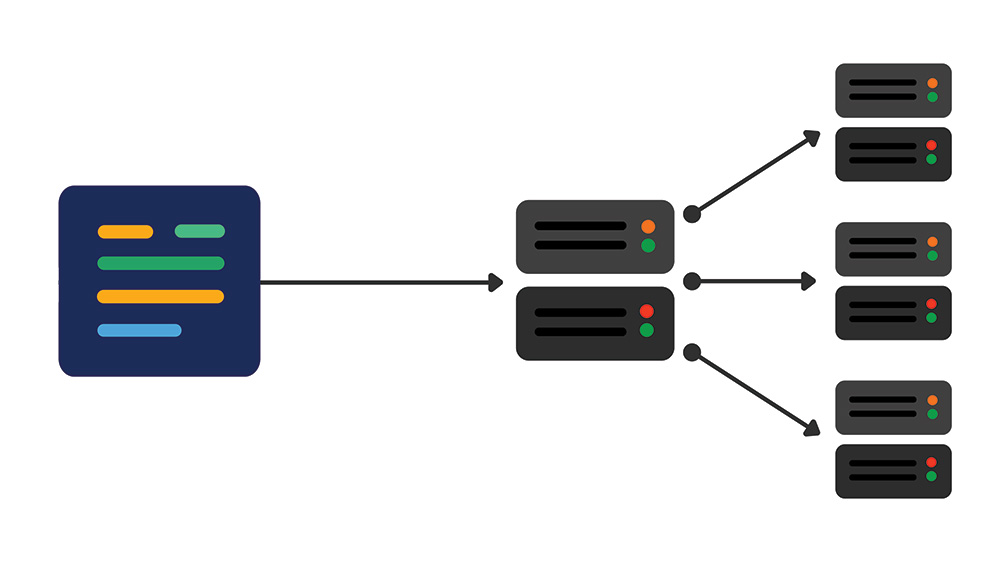

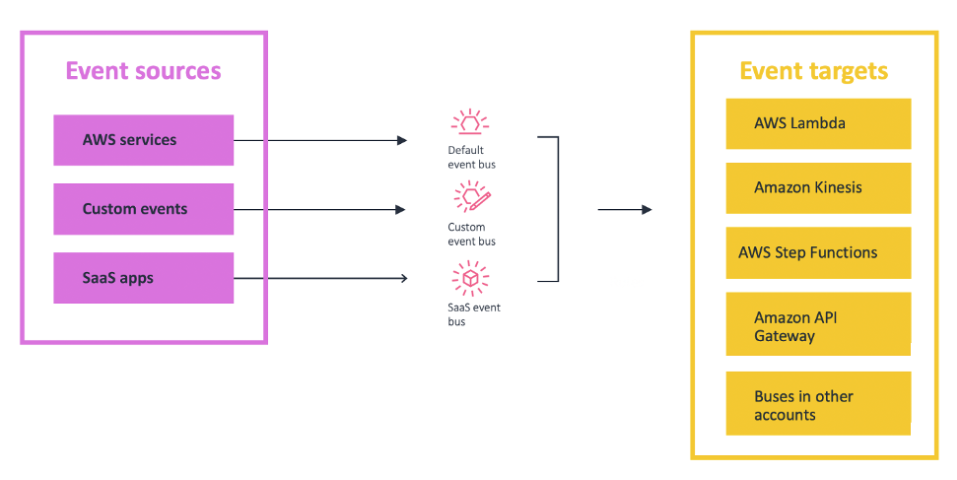

4. Event bus/router

At the core of an EDA lies the event bus or router, which acts as a central hub for distributing events to interested consumers. It is responsible for receiving events from producers and distributing them to the appropriate consumers. The event bus facilitates decoupling between producers and consumers, as each doesn’t need to know about the existence of the other.
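To make the four concepts concrete, here is a minimal in-memory sketch. It is an illustration only, not how a production broker works: the `Event` and `EventBus` classes, the `survey.submitted` event type, and the two handlers are all hypothetical names chosen for this example.

```python
from collections import defaultdict
from dataclasses import dataclass, field
from typing import Any, Callable, DefaultDict, Dict, List


@dataclass(frozen=True)
class Event:
    """A message describing something that happened in the system."""
    type: str
    payload: Dict[str, Any] = field(default_factory=dict)


class EventBus:
    """Routes each published event to the handlers subscribed to its type."""

    def __init__(self) -> None:
        self._handlers: DefaultDict[str, List[Callable[[Event], None]]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Callable[[Event], None]) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event: Event) -> int:
        """Deliver the event and return how many handlers received it."""
        handlers = list(self._handlers.get(event.type, []))
        for handler in handlers:
            handler(event)
        return len(handlers)


# Two independent consumers; neither knows about the producer or the other.
analytics_rows: List[Dict[str, Any]] = []
alerts: List[str] = []


def store_for_analytics(event: Event) -> None:
    analytics_rows.append(event.payload)


def alert_on_low_score(event: Event) -> None:
    if event.payload["score"] < 3:
        alerts.append(f"low score from {event.payload['respondent']}")


bus = EventBus()
bus.subscribe("survey.submitted", store_for_analytics)
bus.subscribe("survey.submitted", alert_on_low_score)

# The producer only talks to the bus, never to a consumer directly.
delivered = bus.publish(Event("survey.submitted", {"respondent": "s-1", "score": 2}))
```

Adding a third consumer here would mean one more `subscribe` call; the producer and the existing handlers stay untouched. This sketch delivers synchronously inside `publish`, whereas real brokers typically deliver asynchronously.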

Why event-driven architectures are on the rise

Scalability and decoupling

Traditional monolithic architectures often suffer from scalability issues and tight coupling between components, making them difficult to scale and maintain. On the other hand, EDAs promote loose coupling, allowing components to communicate asynchronously. This decoupling enables more straightforward scaling of individual components, making the system as a whole more agile and adaptable to changing demands. The asynchronous nature of events enables systems to handle a high volume of events without blocking or slowing down processes, leading to improved performance and responsiveness.
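As a rough illustration of the asynchronous point, the sketch below uses Python's standard `asyncio.Queue` as a stand-in for a message queue. The `worker` and `run` functions, the event counts, and the simulated delay are assumptions made for the example; the idea is only that the producer enqueues events and moves on while a pool of workers processes them.

```python
import asyncio
from typing import List


async def worker(queue: "asyncio.Queue[int]", processed: List[int]) -> None:
    """Consume events from the queue until the task is cancelled."""
    while True:
        event_id = await queue.get()
        await asyncio.sleep(0.01)  # stand-in for slow processing
        processed.append(event_id)
        queue.task_done()


async def run(event_count: int, worker_count: int) -> List[int]:
    queue: "asyncio.Queue[int]" = asyncio.Queue()
    processed: List[int] = []
    workers = [
        asyncio.create_task(worker(queue, processed))
        for _ in range(worker_count)
    ]

    # The producer enqueues and moves on; it never waits for processing.
    for event_id in range(event_count):
        queue.put_nowait(event_id)

    # Wait for the backlog to drain only so the demo can inspect the result.
    await queue.join()
    for task in workers:
        task.cancel()
    await asyncio.gather(*workers, return_exceptions=True)
    return processed


processed = asyncio.run(run(event_count=20, worker_count=4))
```

Scaling the consuming side in this sketch is a matter of changing `worker_count`; the producing loop does not change.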

Flexibility and extensibility

New functionality can be added by introducing new event producers or consumers, without modifying existing components, which keeps development agile. The router also removes the need for heavy coordination between producer and consumer services, speeding up the development process.

Enhanced fault tolerance and resilience

In traditional request-response systems, a failure in one component can cascade and, in the worst case, bring down the entire system. EDAs can handle failures more gracefully. If a consumer fails to process an event, the event bus can retry delivery or forward the event to another appropriate consumer, improving fault tolerance and system resilience.
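The retry-or-forward behaviour can be sketched in a few lines. This is a simplified model under stated assumptions, not any particular broker's algorithm: the `deliver` function, the `max_attempts` parameter, and the three example consumers are hypothetical, and a real system would usually add logging and a backoff delay between attempts.

```python
from typing import Callable, Dict, List

Event = Dict[str, object]
Handler = Callable[[Event], None]


def deliver(event: Event, consumers: List[Handler], max_attempts: int = 3) -> str:
    """Try each consumer in order, retrying up to max_attempts times each.

    Returns the name of the consumer that succeeded, or "undelivered".
    """
    for consumer in consumers:
        for _attempt in range(max_attempts):
            try:
                consumer(event)
                return consumer.__name__
            except Exception:
                continue  # a real broker would log and back off here
    return "undelivered"


calls = {"flaky": 0, "broken": 0, "backup": 0}


def flaky(event: Event) -> None:
    """Fails twice, then succeeds (a transient fault)."""
    calls["flaky"] += 1
    if calls["flaky"] < 3:
        raise RuntimeError("temporary failure")


def broken(event: Event) -> None:
    """Always fails (a permanent fault)."""
    calls["broken"] += 1
    raise RuntimeError("permanent failure")


def backup(event: Event) -> None:
    calls["backup"] += 1


# flaky recovers within its retry budget, so backup is never needed.
first = deliver({"id": 1}, [flaky, backup])
# broken exhausts its retries, so the event is forwarded to backup.
second = deliver({"id": 2}, [broken, backup])
```

In both cases the producer of the event is unaffected by the failure; only the delivery path changes.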

Evolving data landscape

As data becomes more diverse, complex, and voluminous, traditional approaches to handling data are proving inadequate. EDAs provide an elegant solution for managing and processing large streams of data from various sources. With tools like Apache Kafka and RabbitMQ, developers can handle data efficiently, perform real-time analytics, and derive valuable insights from the event streams.

A case study of transformation from a request-response architecture to an event-driven architecture

At DiUS, we specialise in assisting organisations with re-architecting their systems to align with the ever-growing demands of the market. Recently, we partnered with an edtech client to expand their existing systems, empowering them to harness the potential of data analytics and gain real-time insights.

Our client operates services that gather wellbeing survey responses from students and teachers worldwide. They also offer data insight services to retrospectively analyse survey results and predict trends, issuing warnings and alerts to raise awareness if wellbeing survey outcomes fall below defined thresholds. In less than two months, we extended their existing systems by implementing an EDA to stream survey results into a newly developed data analytics platform, all while maintaining seamless integration with their legacy systems.

The Challenges

- Time constraints – MVP to go live in two months: The pressure to deliver a Minimum Viable Product (MVP) within a tight timeline required a streamlined approach that balanced speed with the need for a robust foundation.

- Extensibility for future analytical requirements: A forward-thinking solution needed to accommodate future analytical needs without necessitating a complete overhaul of the architecture.

- Scalability: The system’s ability to handle surges in user activity while remaining cost-effective during quieter periods was essential for consistent performance and resource management.

- Decoupling from legacy systems: Striking the right balance between modernising the system and maintaining interoperability with legacy components was pivotal for a smooth transition and eventual retirement of legacy systems.

- Fault tolerance and resilience: Ensuring data integrity and swift auto-recovery mechanisms were non-negotiable in guaranteeing an uninterrupted service, even during unforeseen disruptions.

Exploring the Options

- Building a brand new platform: Creating an entirely new platform from the ground up offers a clean slate, but it could require substantial development effort and time, potentially affecting the MVP deadline.

- Utilising DynamoDB and Elasticsearch: Leveraging existing technologies like DynamoDB and Elasticsearch for analytics could provide quick wins, but it might not address all extensibility and scalability requirements.

- Combining DynamoDB with RDS: The approach of using DynamoDB for frontend data while streaming analytics data into RDS introduces a hybrid model that seeks to balance familiarity with innovation.

We decided to go with the third option, “Combining DynamoDB with RDS”.

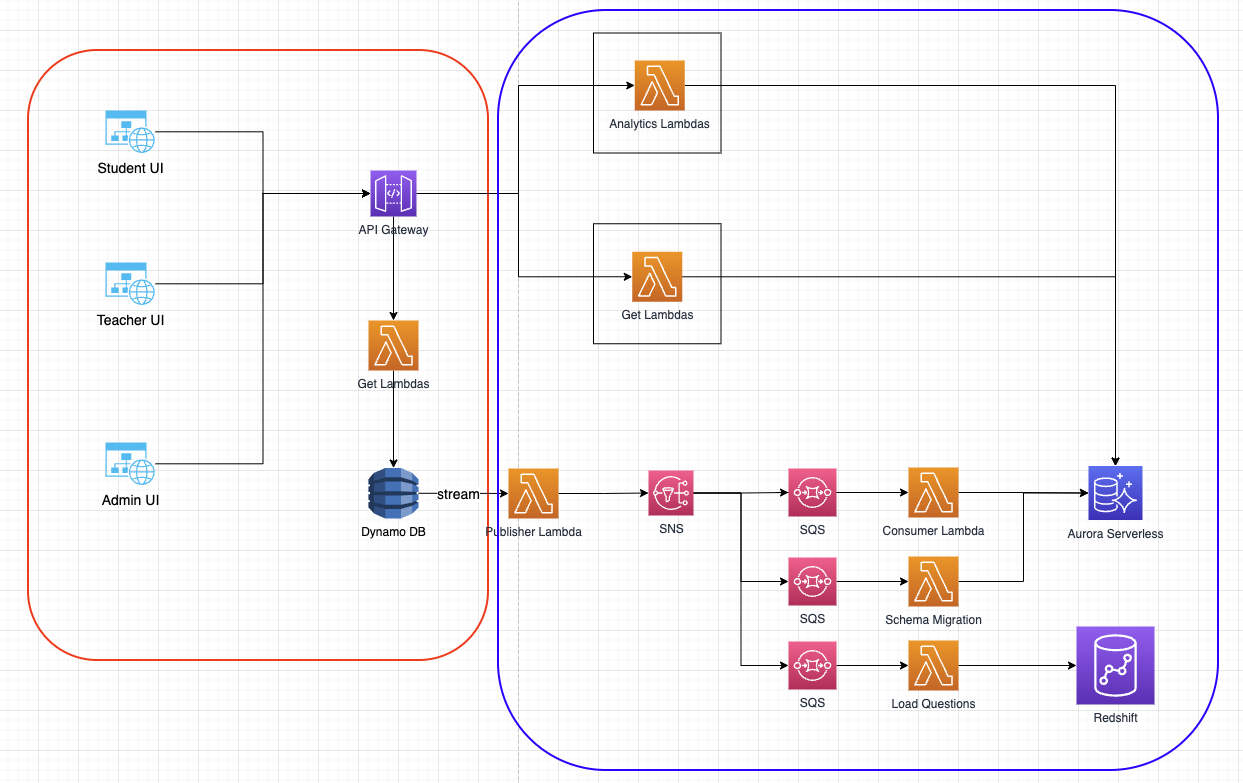

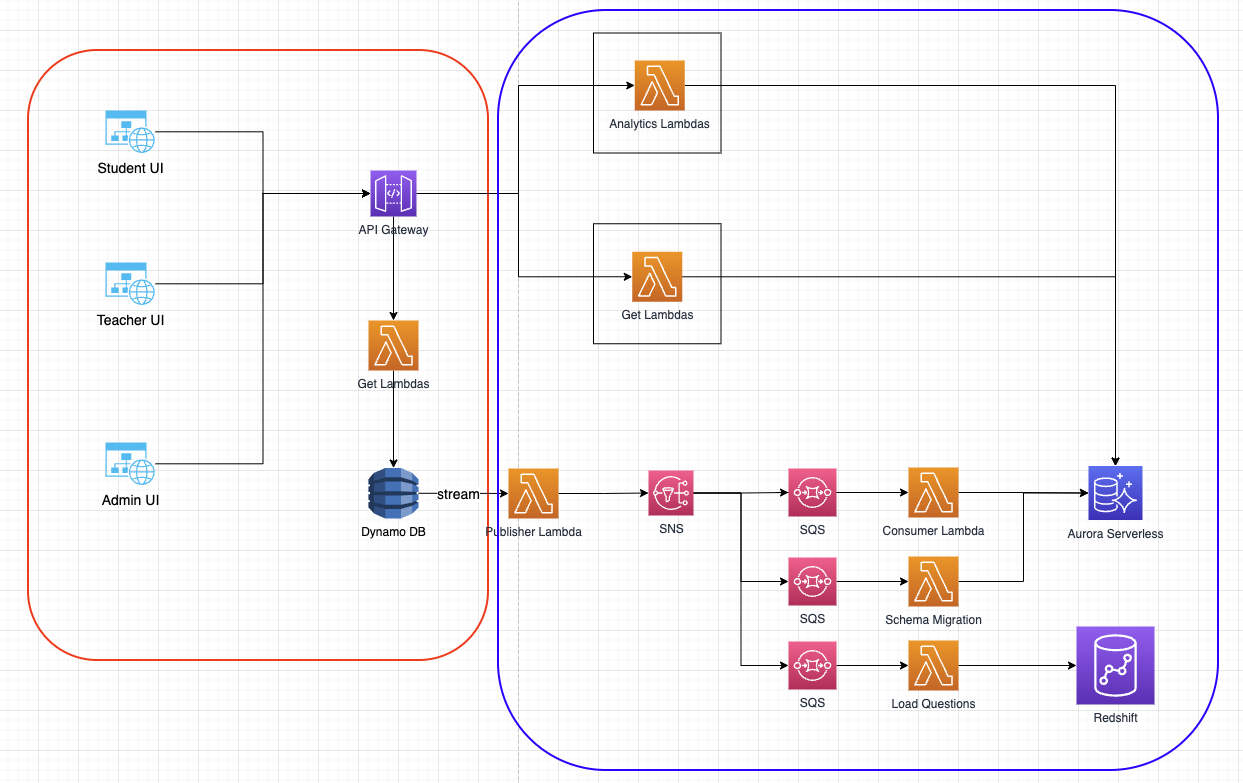

The accompanying diagram illustrates the original system architecture, supplemented with the EDA for data streaming into the analytics platform. The red box signifies the pre-existing request-response based architecture, employing DynamoDB as the database, which, though unsuitable for analytics needs, remains unchanged. The components within the blue box were introduced to provide data insights both to customers and for internal analytics purposes.

The adoption of an event-driven architecture provides flexibility and scalability, especially during peak periods at the beginning of each semester, when survey submissions from students and teachers surge. The system automatically scales down after peak times, optimising costs. The event-driven design enables a streamlined data flow into the analytics platform without requiring fundamental changes to the existing DynamoDB component.

The Pros

- Efficient MVP delivery: Opting for a hybrid approach allows for the swift creation of an MVP without the need to re-architect the entire platform.

- Future-proofing: By introducing the capability to communicate with RDS directly, this architecture paves the way for retiring DynamoDB in due course and embracing a more scalable and cost-effective solution.

- Decoupling via SQS and SNS: By employing messaging services like SQS and SNS, the architecture ensures services are decoupled, facilitating communication flexibility while enabling fan-out for multiple recipients when necessary.

- Scalability with event-driven architecture: Implementing an event-driven architecture (EDA) empowers the platform to gracefully scale to accommodate a high volume of requests concurrently, a critical factor in today’s user-centric applications.

- Data integrity through dead-letter queues: The presence of dead-letter queues provides a mechanism for retries and preserves unprocessed data, bolstering data integrity and facilitating timely alerts and investigations.
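The fan-out and dead-letter ideas from the list above can be modelled in memory. To be clear, this is not the SNS or SQS API and not our client's code: `Topic`, `WorkQueue`, `Envelope`, and `max_receives` are hypothetical stand-ins, with `max_receives` loosely playing the role that a maximum receive count plays in a queue's redrive policy.

```python
from collections import deque
from dataclasses import dataclass
from typing import Callable, Deque, Dict, List

Message = Dict[str, object]


@dataclass
class Envelope:
    """A queued message plus the number of times it has been received."""
    body: Message
    receives: int = 0


class WorkQueue:
    """In-memory queue that dead-letters a message after too many failed receives."""

    def __init__(self, name: str, max_receives: int = 3) -> None:
        self.name = name
        self.max_receives = max_receives
        self.messages: Deque[Envelope] = deque()
        self.dead_letters: List[Message] = []

    def send(self, body: Message) -> None:
        self.messages.append(Envelope(body))

    def drain(self, handler: Callable[[Message], None]) -> int:
        """Process until the queue is empty; return the number of successes."""
        succeeded = 0
        while self.messages:
            envelope = self.messages.popleft()
            envelope.receives += 1
            try:
                handler(envelope.body)
                succeeded += 1
            except Exception:
                if envelope.receives >= self.max_receives:
                    self.dead_letters.append(envelope.body)  # keep for investigation
                else:
                    self.messages.append(envelope)  # make it available for retry
        return succeeded


class Topic:
    """Copies every published message to all subscribed queues (fan-out)."""

    def __init__(self) -> None:
        self.queues: List[WorkQueue] = []

    def subscribe(self, queue: WorkQueue) -> None:
        self.queues.append(queue)

    def publish(self, body: Message) -> None:
        for queue in self.queues:
            queue.send(dict(body))  # each queue gets its own copy


topic = Topic()
analytics_queue = WorkQueue("analytics")
alerts_queue = WorkQueue("alerts")
topic.subscribe(analytics_queue)
topic.subscribe(alerts_queue)

topic.publish({"survey_id": 1, "score": 4})
topic.publish({"survey_id": 2, "score": None})  # malformed: score is missing

stored: List[Message] = []
checked: List[object] = []


def load_into_analytics(body: Message) -> None:
    if body["score"] is None:
        raise ValueError("score is required")
    stored.append(body)


def check_for_alert(body: Message) -> None:
    checked.append(body["survey_id"])


analytics_ok = analytics_queue.drain(load_into_analytics)
alerts_ok = alerts_queue.drain(check_for_alert)
```

The malformed survey never reaches `stored`, but it is not lost either: after three failed receives it sits in `analytics_queue.dead_letters`, where it can trigger an alert and be inspected. The alerts consumer, reading from its own queue, is unaffected by the analytics failure.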

The Cons

- Increased complexity: A hybrid architecture can introduce complexities, potentially requiring additional effort for integration and maintenance.

- Debugging and troubleshooting challenges: The distributed nature of the system may make pinpointing issues more intricate, necessitating a comprehensive debugging and troubleshooting strategy.

- Monitoring difficulty: Monitoring a system with diverse components can be challenging, requiring robust monitoring tools and practices to ensure each aspect’s health and performance is upheld.

Conclusion

As the digital landscape continues to evolve, EDAs have emerged as a powerful solution to meet the demands of real-time responsiveness, scalability, and fault tolerance. Their compatibility with microservices, cloud environments, and modern data processing technologies has further fuelled their popularity.

While EDAs may not be suitable for every use case, their advantages in various domains such as financial services, gaming, retail and social media make them an increasingly vital tool in the arsenal of software engineers and architects. By embracing EDAs, organisations can future-proof their applications and stay competitive in a dynamic and fast-paced digital world.